How to Optimize Computer Vision Models with Grayscale Images

Machine Learning models, especially in Computer Vision, often require image data as input.

1. Scenario:

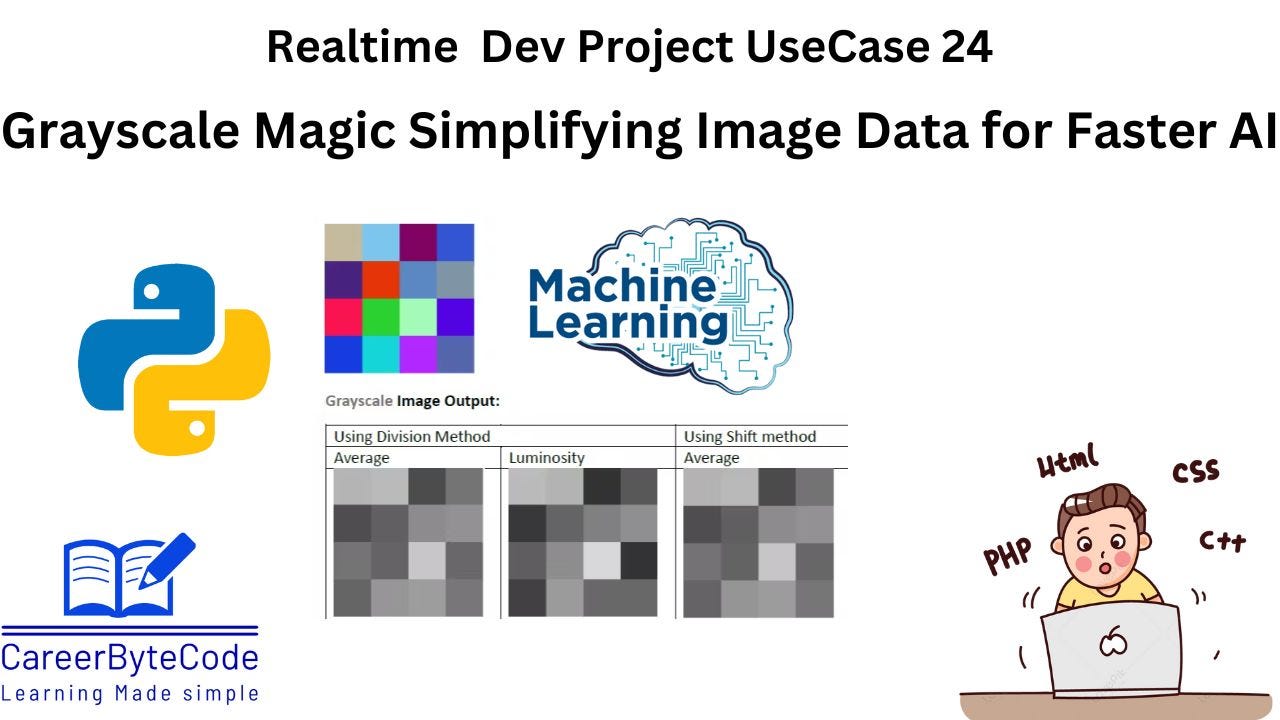

In Machine Learning (ML), grayscale images are often used instead of color images to reduce computational complexity. Many ML models (like CNNs for image classification) do not need color information, as they focus on shapes, textures, and patterns.

2. Why We Need This Use Case

Machine Learning models, especially in Computer Vision, often require image data as input. However, using color images (RGB) increases data size and computational complexity. Many ML models, like CNNs for image classification, focus on shape, texture, and patterns rather than color information. Converting images to grayscale optimizes performance while preserving crucial details.

🔹 Why is it needed in ML?

✅ Reduces Data Size → A color image (RGB) has 3 channels, while grayscale has 1, cutting memory usage by 66%.

✅ Speeds Up Processing → Less data means faster training and inference.

✅ Focuses on Features → Many ML tasks (e.g., face detection, edge detection) work well without color.

3. When We Need This Use Case

When training CNNs for image classification, where color information is not essential.

In face and object detection applications, where structure matters more than color.

When reducing dataset size and speeding up training in resource-constrained environments.

In edge detection, segmentation, and texture analysis tasks.

For medical imaging applications, where grayscale images are commonly used (e.g., X-rays, MRI scans).