Kubernetes Basics: Simplifying Stateless Application Deployment

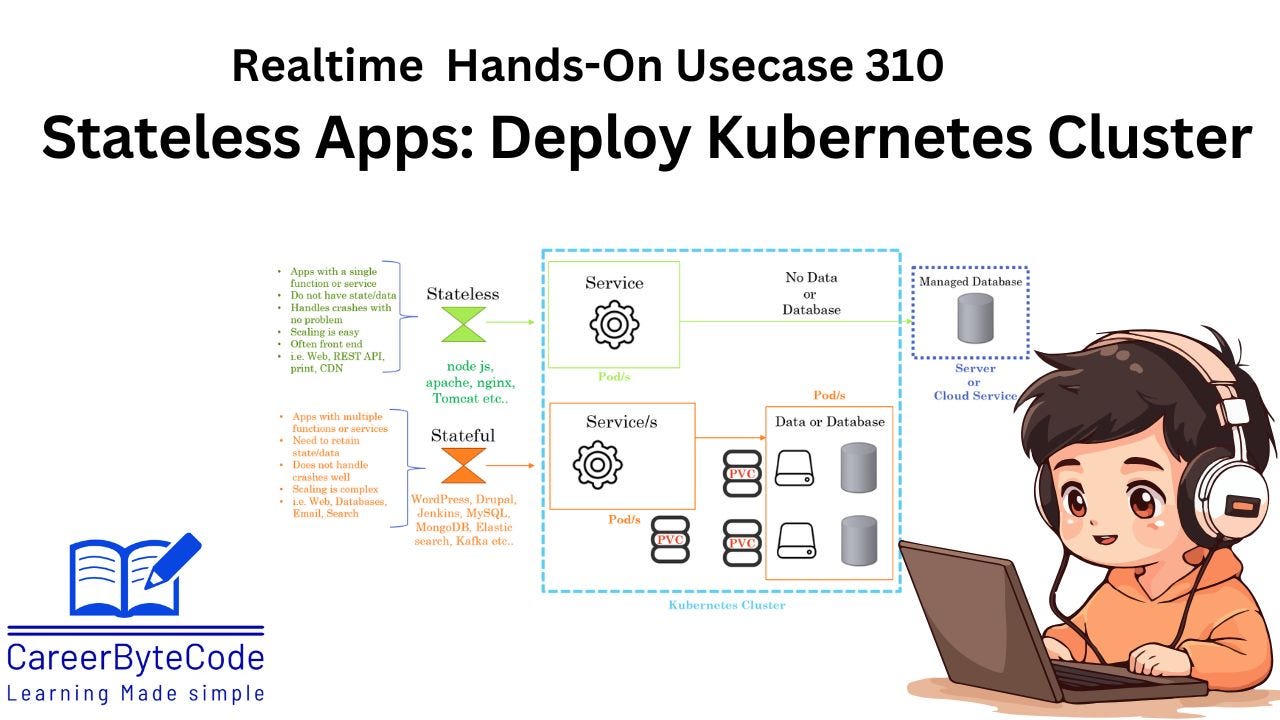

Stateless applications in Kubernetes are a foundational concept, enabling developers to deploy lightweight and scalable applications without worrying about maintaining session state.

1. Why We Need This Use Case

This approach suits applications such as RESTful APIs and static websites, and understanding it is essential for implementing scalable, fault-tolerant microservices.

In modern application development, scalability, reliability, and fault tolerance determine whether a service can handle growing user demand. Stateless applications, which do not store client-specific data on the server, fit Kubernetes' architecture well: because no replica holds data that the others lack, containers can be replicated, scaled, or replaced without disrupting application functionality.

Key reasons why this use case is essential:

Scalability: Stateless applications can scale horizontally by adding more replicas, because no replica holds local state that would have to be kept consistent with the others.

Fault Tolerance: With Kubernetes handling replica management, the loss of a single pod need not affect the application's availability. The remaining replicas keep serving requests while Kubernetes starts a replacement.

Separation of Concerns: The stateless architecture allows developers to offload data persistence to external storage or databases, focusing on application logic.

Lightweight Deployments: Stateless applications typically need no attached storage and can be started and stopped quickly, which suits containerized environments.

Cloud-Native Adoption: Working with stateless deployments introduces developers to cloud-native development principles, a widely adopted approach for building applications.
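The points above on scalability, fault tolerance, and separation of concerns can be illustrated with a small simulation. This is a minimal sketch, not Kubernetes code: `ExternalStore` and `Replica` are hypothetical names standing in for an external database and for pods, and the round-robin routing is an assumption made only for the example.

```python
from dataclasses import dataclass, field


@dataclass
class ExternalStore:
    """Hypothetical stand-in for an external database or cache."""
    data: dict = field(default_factory=dict)

    def increment(self, key: str) -> int:
        # All persistent data lives here, outside any replica.
        self.data[key] = self.data.get(key, 0) + 1
        return self.data[key]


@dataclass
class Replica:
    """Hypothetical stand-in for one pod; it keeps no per-client data."""
    name: str
    store: ExternalStore

    def handle(self, user: str) -> str:
        visits = self.store.increment(user)
        return f"{self.name}: visit {visits} for {user}"


store = ExternalStore()
replicas = [Replica(f"pod-{i}", store) for i in range(3)]

# Send successive requests from the same user to different replicas.
responses = [replicas[i % len(replicas)].handle("alice") for i in range(4)]

# Simulate losing a pod: a surviving replica continues from the shared state.
replicas.pop(0)
after_failure = replicas[0].handle("alice")

print(responses)
print(after_failure)
```

Because the visit count lives in the store rather than in any replica, the request served after `pod-0` is removed still sees the earlier visits. A replica that kept the counter in its own memory would lose it when replaced.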

2. When We Need This Use Case

Deploying applications that do not require session data persistence.

Scaling applications dynamically based on user traffic.

Ensuring fault tolerance in microservices architecture.

Building a reliable infrastructure for RESTful APIs, content delivery, or basic web apps.

Reducing downtime during application updates or failures.

This use case is relevant in scenarios where applications require lightweight, scalable, and distributed architectures. Some specific situations include:

Microservices Architecture: Deploying RESTful APIs, gateways, or microservices that process requests independently.

Content Delivery: Hosting static websites or content where no session or user-specific data needs to be retained on the server.

High-Traffic Applications: Dynamically scaling an application to handle traffic spikes, such as during sales events or product launches.

Decoupled Systems: Applications integrated with external databases, caches, or third-party APIs for data persistence and state management.

Continuous Integration/Delivery: Testing application updates and rollouts in environments where ephemeral and easily deployable services are required.

Fault-Tolerant Systems: Reducing downtime by running multiple replicas of an application that can seamlessly take over if a pod fails.
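For the high-traffic scenario, it can help to see how a replica count might follow load. The sketch below assumes the ratio rule described in the Kubernetes Horizontal Pod Autoscaler documentation (desired replicas = ceil(current replicas × current metric / target metric)); the autoscaler itself is not covered in this section, and the function name, parameters, and bounds here are hypothetical. The real autoscaler also applies a tolerance and stabilization behavior that this sketch leaves out.

```python
import math


def desired_replicas(current_replicas: int,
                     current_load: float,
                     target_load: float,
                     min_replicas: int = 1,
                     max_replicas: int = 10) -> int:
    """Scale the replica count in proportion to load, within fixed bounds.

    current_load and target_load are per-replica averages in the same unit
    (for example, requests per second). All names are illustrative.
    """
    if target_load <= 0:
        raise ValueError("target_load must be positive")
    raw = math.ceil(current_replicas * current_load / target_load)
    # Clamp to the configured bounds.
    return max(min_replicas, min(max_replicas, raw))


# Traffic spike: 3 replicas at 90 req/s each, target 60 req/s per replica.
print(desired_replicas(3, 90, 60))   # 5
# Quiet period: load drops to 20 req/s per replica.
print(desired_replicas(3, 20, 60))   # 1
# Extreme spike: the result is capped at max_replicas.
print(desired_replicas(4, 300, 60))  # 10
```

This kind of proportional scaling only works cleanly when any replica can serve any request, which is the property stateless applications provide.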